The Missing Layer Between AI Pilots and Enterprise Scale

Models can read the documents. They still need the context to navigate the real organization.

Enterprise AI is finally moving from experiments to production. But as models, tools, and agents spread through organizations, one gap keeps showing up: the AI still does not understand how the organization actually works.

Deloitte’s 2026 enterprise AI report captures the moment well. AI access is rising, more experiments are reaching production, and agentic adoption is accelerating. But activation still lags, and governance remains immature.

Enterprise AI is scaling, but not cleanly.

Access is rising: worker access to sanctioned AI tools grew from under 40% to under 60% in a year.

Activation is lagging: among workers with access, fewer than 60% use AI in their daily workflow.

Production is still limited: only 25% of respondents say 40% or more of their AI experiments are in production today, though 54% expect to reach that level within three to six months.

Agentic adoption is coming fast: 74% of companies plan to use agentic AI at least moderately within two years.

Governance is behind: only 21% report having a mature governance model for autonomous agents.

That pattern matters because the next phase of enterprise AI is not about whether models work. It is about whether AI can function reliably inside real organizations.

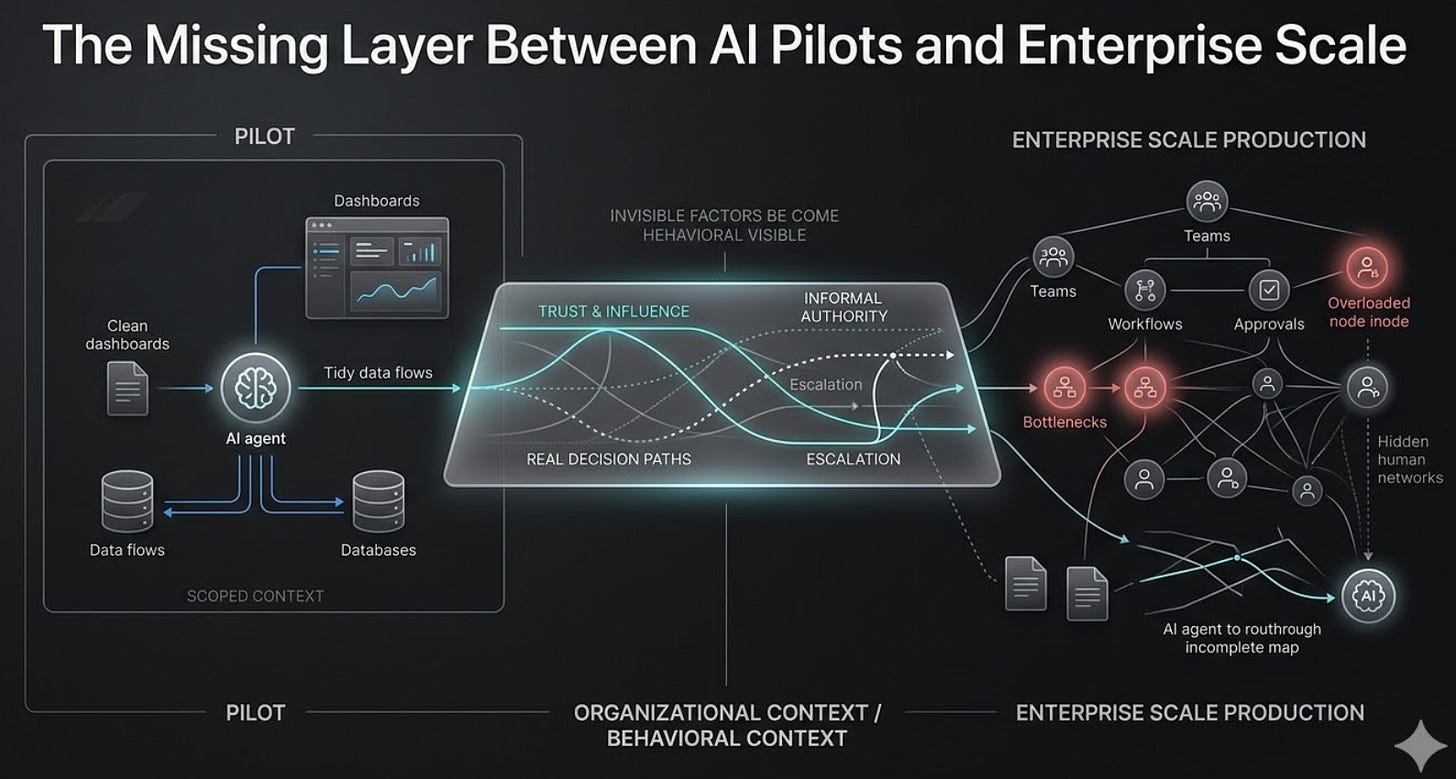

A pilot can look impressive in a clean environment. It runs with a small team, scoped data, limited stakeholders, and fewer consequences. Production is different. Production requires integration with existing systems, security reviews, compliance checks, monitoring, maintenance, and ongoing operational ownership. It also exposes the realities pilots can hide: edge cases, coordination problems, conflicting priorities, and the harder work of scaling what succeeded in isolation. Deloitte calls this the proof-of-concept trap.

I would add one more reason pilots stall: organizational context.

Most enterprise AI systems today are built on two layers of data.

Tier 1: Structural — org charts, titles, reporting lines

Tier 2: Transactional — documents, tickets, messages, meeting notes

These layers matter. They tell AI what the official organization looks like and what information has been recorded. But they do not tell AI how decisions actually move in practice.

That missing third layer is behavioral context: who people trust, who really makes the call, where work actually gets escalated, whose approval matters under pressure, which workflows exist on paper versus in reality, and when someone may be formally responsible but practically unavailable.

A recent essay from LEAD’s BehaviorGraph project makes this distinction explicitly: most enterprise AI categories still operate on structural and transactional data, while real decisions often live in a third behavioral tier. That is why so many systems are technically correct and operationally wrong.

An AI agent retrieves the right policy document. But the real decision path shifted six months ago because a trusted legal counsel or staff engineer became the true checkpoint.

An AI routes a request to the right person by title. But that person is overloaded, politically peripheral, or no longer the one others actually defer to.

A drafting tool proposes the right message. But the account is sensitive, and the outreach needs to go through a specific internal sponsor.

In each case, the content may be correct. The action is still wrong.
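
To make the routing example concrete, here is a minimal Python sketch that contrasts routing by formal title with routing by behavioral signals. Everything in it is an assumption made for illustration: the `Person` fields, the `deferral_score` and `open_requests` signals, the overload threshold, and the sample names do not come from any real system or from the research cited above.

```python
from dataclasses import dataclass


@dataclass
class Person:
    name: str
    title: str
    open_requests: int     # hypothetical workload signal
    deferral_score: float  # hypothetical 0..1 signal: how often peers defer to this person


def route_by_title(people, title):
    """Structural routing: return the first person holding the formal title."""
    for person in people:
        if person.title == title:
            return person
    return None


def route_by_behavior(people, max_open_requests=10):
    """Behavioral routing: prefer whoever peers defer to, skipping overloaded people."""
    available = [p for p in people if p.open_requests <= max_open_requests]
    candidates = available or people  # fall back if everyone is overloaded
    if not candidates:
        return None
    return max(candidates, key=lambda p: p.deferral_score)


# Hypothetical sample data.
people = [
    Person("Avery", "General Counsel", open_requests=25, deferral_score=0.3),
    Person("Blake", "Senior Counsel", open_requests=4, deferral_score=0.9),
]

print(route_by_title(people, "General Counsel").name)
print(route_by_behavior(people).name)
```

With this sample data the two functions pick different people: the title lookup selects the overloaded title holder, while the behavioral lookup selects the person others actually defer to. A real system would need far richer signals than two numbers, but the gap between the two answers is the point.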

That is the kind of failure the next generation of enterprise AI has to solve. Not just whether the system can retrieve, summarize, generate, or classify. But whether it can operate with enough awareness of human dynamics, decision paths, and organizational reality to act appropriately.

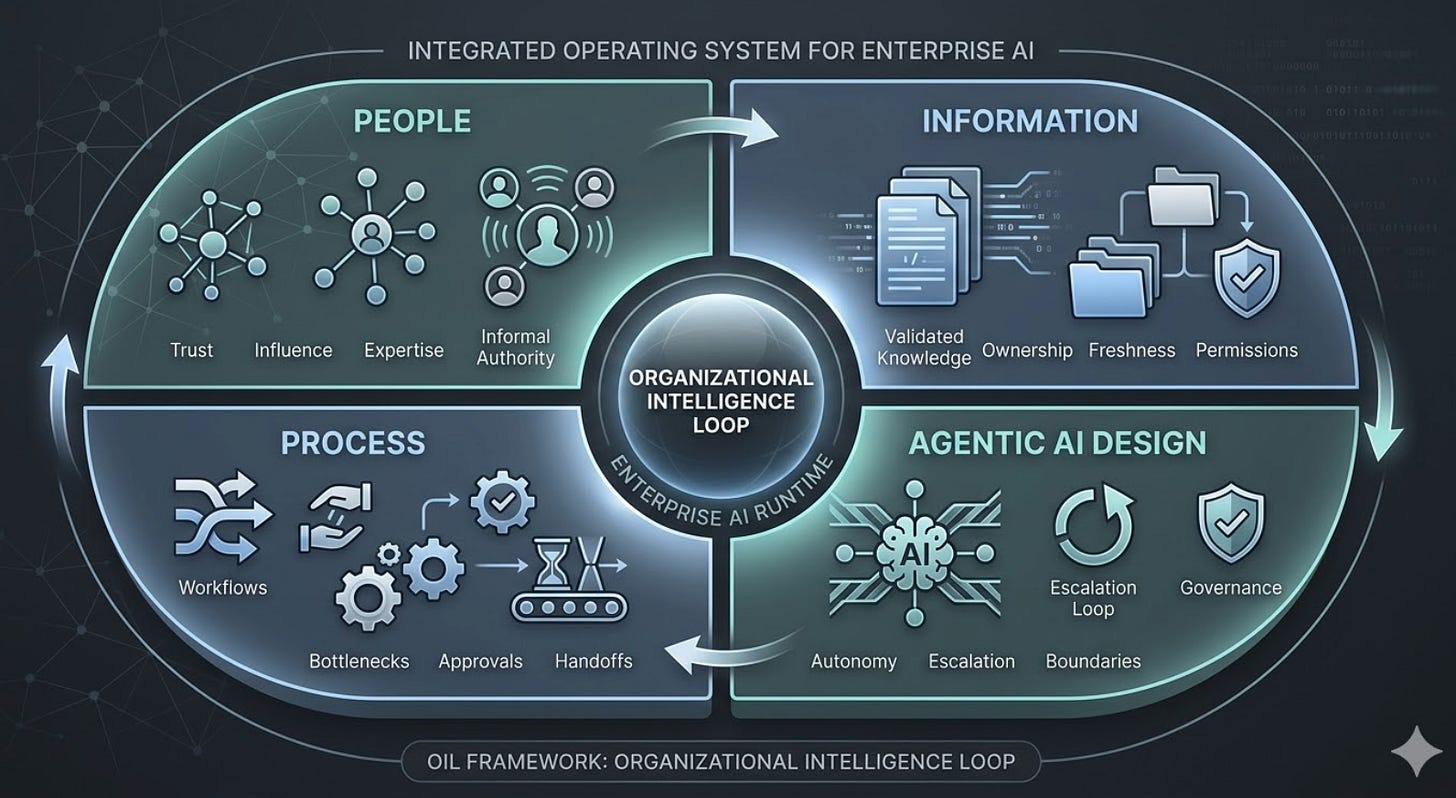

My research frames this need as the Organizational Intelligence Loop, or OIL: a framework for what enterprise AI needs in order to operate inside a real organization. It has four dimensions.

People — who knows what, who is trusted, who influences outcomes

Information — what is current, owned, validated, and permissioned

Process — how work actually flows, where bottlenecks form, where decisions stall

Agentic AI Design — what an agent is allowed to do, when it should escalate, and how governance is embedded into action

The point is not that enterprise AI lacks intelligence in the abstract. The point is that most systems still lack the right operating context.

Information alone is not enough. A system can retrieve the most relevant document and still fail because the document is outdated in practice. Process alone is not enough. A workflow can be mapped correctly on paper and still fail because it misses the informal checkpoint that actually determines whether work moves forward. Even governance alone is not enough if it is defined only as policy after the fact rather than situational judgment at the moment of action.

This matters even more as agentic AI scales. Deloitte reports that 74% of companies expect to use agentic AI at least moderately within two years, yet only 21% say they already have mature governance for autonomous agents. That gap is serious because agents do not merely recommend actions. They can take them directly. They can route work, trigger workflows, escalate issues, make updates, and interact with systems at speed.

I agree with Deloitte's concern, but I would push the argument further. Governance is not only about what happens after an agent acts. It is also about whether the agent had enough organizational context to act correctly in the first place.

A registry of agents is not the same as a behavioral governance layer. A policy is not the same as a live authority map. A correct answer is not useful if it is routed to the wrong person, exposed to the wrong audience, or executed in the wrong sequence.
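
One way to picture the difference between a static policy and a live authority map is a check that runs before an agent acts. The Python sketch below is hypothetical throughout: `AUTHORITY_MAP`, `ProposedAction`, `check_action`, and the sample entries are invented for illustration and do not describe any existing product or API. It covers only two of the failure modes named above, wrong audience and wrong sequence.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedAction:
    kind: str                                   # e.g. "send_outreach"
    target: str                                 # e.g. an account name
    audience: set = field(default_factory=set)  # who would see the result
    completed_steps: list = field(default_factory=list)


# Hypothetical live authority map: (action kind, target) -> current requirements.
# In practice this would be refreshed from behavioral signals, not hard-coded.
AUTHORITY_MAP = {
    ("send_outreach", "acme"): {
        "allowed_audience": {"jordan", "acme_contact"},
        "required_prior_steps": ["sponsor_review"],
    },
}


def check_action(action, authority_map=AUTHORITY_MAP):
    """Return reasons to escalate; an empty list means the agent may proceed."""
    rule = authority_map.get((action.kind, action.target))
    if rule is None:
        return ["no authority entry for this action; escalate to a human"]
    problems = []
    extra = action.audience - rule["allowed_audience"]
    if extra:
        problems.append(f"audience not permitted: {sorted(extra)}")
    for step in rule["required_prior_steps"]:
        if step not in action.completed_steps:
            problems.append(f"missing prior step: {step}")
    return problems


draft = ProposedAction("send_outreach", "acme", audience={"acme_contact", "all_sales"})
print(check_action(draft))
```

The design choice worth noting is that an unknown action defaults to escalation rather than permission, so gaps in the map surface as questions for a human instead of silent agent actions.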

This is where many discussions of enterprise AI remain too narrow. They focus on model quality, knowledge retrieval, prompt engineering, or tool integration. All of those matter. But once AI begins to operate across real business environments, another question becomes unavoidable: does the system understand how the organization functions as a living system, not just as a collection of files and formal roles?

That question becomes especially important when work is ambiguous, political, or cross-functional. In those settings, success is rarely determined by content alone. It depends on timing, trust, influence, permission, overload, sequencing, and informal authority. Those are not edge issues. They are often the difference between adoption and resistance, execution and delay, correctness and failure.

This is also why Organizational Network Analysis matters again, but in a new form. Historically, ONA was often periodic, retrospective, and consulting-heavy. It was useful for diagnosing hidden influence or collaboration breakdowns, but it was rarely built into daily operations. What enterprise AI needs now is not a one-time map of collaboration. It needs a continuous layer that can detect trust, influence, overload, escalation paths, and decision patterns as the organization changes.

The real leap is treating ONA as continuous infrastructure rather than a one-time deliverable, turning behavioral signals into live, queryable context for AI systems.
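
As a rough illustration of what "live, queryable context" could mean, the Python sketch below stores behavioral events and weights recent ones more heavily than old ones using exponential decay. The class name `LiveOrgContext`, the event kinds, the 30-day half-life, and the sample data are all assumptions made for this example; this is not how any particular ONA product works.

```python
import math
from collections import defaultdict


class LiveOrgContext:
    """Toy store of time-decayed behavioral signals (illustrative only)."""

    def __init__(self, half_life_days=30.0):
        # After one half-life, an event counts half as much as a fresh one.
        self.decay = math.log(2) / half_life_days
        self.events = []  # tuples of (day, source, target, kind)

    def record(self, day, source, target, kind):
        """Record one signal, e.g. kind='escalated_to'."""
        self.events.append((day, source, target, kind))

    def weights(self, kind, today):
        """Sum exponentially decayed weights per target for one signal kind."""
        totals = defaultdict(float)
        for day, _source, target, event_kind in self.events:
            if event_kind == kind and day <= today:
                totals[target] += math.exp(-self.decay * (today - day))
        return dict(totals)

    def top(self, kind, today):
        """Return the target with the highest decayed weight, or None."""
        current = self.weights(kind, today)
        return max(current, key=current.get) if current else None


# Hypothetical history: escalations go to Dana early on, then shift to Eli.
ctx = LiveOrgContext(half_life_days=30)
for day in (0, 5, 10):
    ctx.record(day, "platform_team", "dana", "escalated_to")
for day in (170, 175):
    ctx.record(day, "platform_team", "eli", "escalated_to")

print(ctx.top("escalated_to", today=20))
print(ctx.top("escalated_to", today=180))
```

Queried on day 20, the store points to Dana. Queried on day 180, it points to Eli, even though Dana has more events overall, because her events have decayed. A one-time map would have frozen the first answer.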

That shift matters because enterprises do not stand still. Teams reorganize. Decision-makers change. Experts become overloaded. Informal power shifts. New initiatives create temporary hubs of influence. Legacy processes linger long after they stop reflecting how work really gets done. If AI is expected to operate inside this environment, it cannot rely only on static maps and historical documents. It needs a more dynamic understanding of the organization it is acting within.

In that sense, the next enterprise AI category may not simply be better copilots or more agent actions. It may be the infrastructure that makes organizational reality queryable at runtime.

Not just what the company says it is.

Not just what its documents record.

But how it actually functions.

That is also why I think the enterprise AI market is still missing an important category. Search platforms help organizations retrieve information. Foundation models help them reason across language. Workflow tools help automate process. HR and people analytics tools help analyze talent and engagement. But there is still a gap between these categories: the live organizational context layer that helps AI understand who matters, what is current, how decisions move, when escalation is needed, and where formal process diverges from practical reality.

Without that layer, AI can still be useful. But it will struggle to become reliable infrastructure for enterprise execution.

Deloitte’s report ultimately lands in a similar place from a different direction. It argues that organizations need to close the gap between access and activation, redesign work around AI, build governance before scale, and treat AI as foundational to how the organization operates. I agree. But I would add that redesigning work around AI requires redesigning AI around the organization as it actually behaves.

That is a different challenge from simply deploying more tools. It is not just a product question. It is an organizational intelligence question.

The companies that solve this well will not necessarily be the ones with the most pilots, the most aggressive branding, or the fastest rollouts. They will be the ones that understand that enterprise AI is not only a technical layer. It is an operating layer. And operating layers fail when they cannot read the real environment they are supposed to work inside.

That is the territory this newsletter will cover.

Not AI as theater.

Not AI as demo.

But AI as it is actually built, adopted, governed, and scaled inside real organizations.

Because the hardest part of enterprise AI is no longer getting a model to work.

It is getting the organization to work with it.

Next issue: OIL Dimension 1 — People. How can enterprise AI begin to understand trust, influence, and informal authority without relying on a static org chart or a six-month survey?
